Our Journey to the 6th Kibo-RPC International Round

An introduction to the 6th Kibo-RPC competition held by JAXA and NASA, some of our findings, and the overall experience.

Introduction: A Mission to the ISS

Our engineering team recently took on one of the more unusual challenges in the industry: writing code to be executed on the International Space Station. The Kibo Robot Programming Challenge (Kibo-RPC), held by JAXA and NASA, tasks teams with controlling Astrobee, NASA’s cube-shaped autonomous robots, within the "Kibo" Japanese Experiment Module. We became national champions and secured 4th place in the international round.

For more information, visit https://jaxa.krpc.jp/

Technical Objective

The mission required us to develop a system enabling Astrobee to navigate a simulation environment and, eventually, the real ISS. JAXA provided a Java-based API for camera access, flashlight control, and basic locomotion; building the mission logic on top of it was up to us.

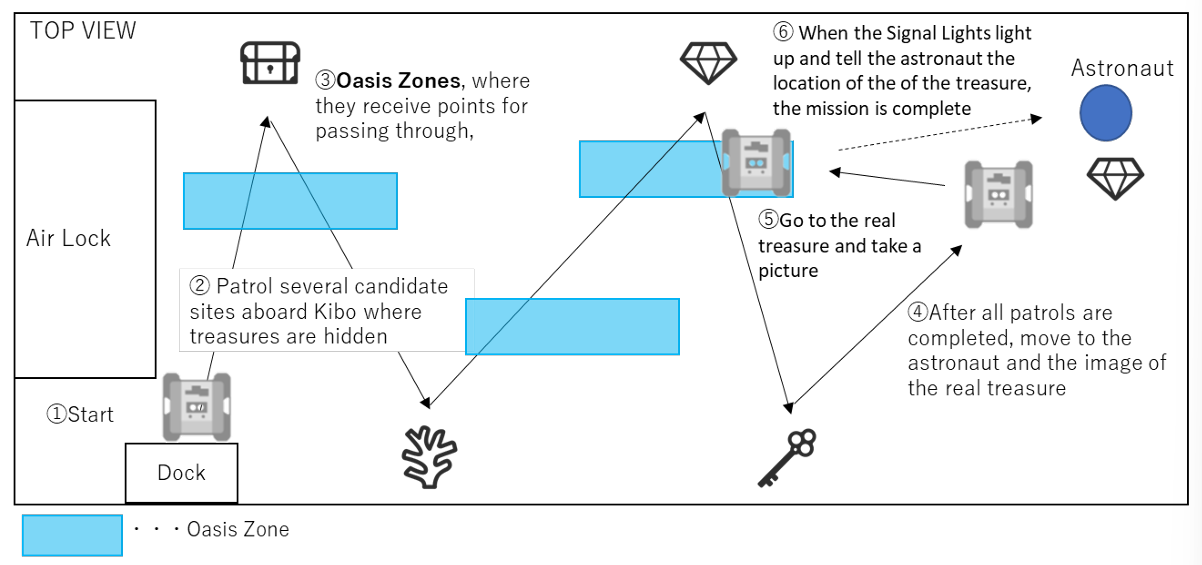

The tasks included:

- Autonomous Navigation: Moving through the Kibo Module while passing through specific "bonus zones" for extra points.

- Target Recognition: Identifying pseudo-randomly placed images, determining their types (based on the programming manual), and recording their quantities.

- The "Treasure Hunt": Locating an astronaut holding a placard that revealed the "treasure." We then had to navigate to that specific location and trigger a notification within a precise angle and range.

- Environmental Adaptability: The system had to account for randomized image types, varying positions, and "landmark" decoys meant to distract the sensors.

The Technical Stack: Coding in a "Time Capsule"

Because the Astrobee’s hardware was finalized years before its deployment, we had to work within a specific, older ecosystem to ensure stability on the High-Level Processor (HLP).

- Language & IDE: Java via Android Studio 3.6.3.

- OS: Android 7.1.1 (Nougat).

- ML Framework: YOLOv11 models exported to .tflite (float16) or .onnx.

- Simulation: A Docker-based environment running ROS (Robot Operating System) with RViz for visualization.

- Host Environment: Successfully deployed across Ubuntu (22.04/24.04) and Arch Linux.

The Computer Vision Pipeline: From Raw Image to Data Extraction

Our core challenge was transforming a raw 1280×720 navigation feed into an accurate count of landmarks and treasures. Because the object detector operated on tightly cropped patches rather than full frames, maintaining accurate spatial context was critical.

Region-of-Interest Extraction Using ArUco Markers

We relied on ArUco markers placed near each area to define the expected field of view. Once the required number of markers had been detected (usually 1 or 2, depending on the waypoint), the pipeline ran the following steps:

- Compute the marker center and average side length → pixel-to-physical scale

- Estimate marker rotation from ordered corner correspondence

- Apply a fixed geometric transform (derived from rulebook dimensions) to predict the four corners of the target surface in image coordinates, with an added safety margin

- Create a binary mask, rotate the masked image to axis-align the region, then crop and Lanczos-resize to the model’s native 480×480 input
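The first three steps can be sketched in plain Java. This is a minimal illustration, not our flight code: the class `MarkerRoi`, its method names, and the corner order (top-left, top-right, bottom-right, bottom-left, which is the order OpenCV's ArUco detector reports) are assumptions made for the example. It also treats the mapping as a 2D similarity transform (scale, rotation, translation) and ignores perspective distortion.

```java
/** Hypothetical helper: predicts a target region from one detected marker. */
final class MarkerRoi {

    /** Marker center: the mean of the four corner points. */
    static double[] center(double[][] corners) {
        double x = 0.0, y = 0.0;
        for (double[] p : corners) {
            x += p[0];
            y += p[1];
        }
        return new double[] { x / 4.0, y / 4.0 };
    }

    /** Average side length in pixels, taken over the four edges. */
    static double averageSide(double[][] corners) {
        double sum = 0.0;
        for (int i = 0; i < 4; i++) {
            double[] a = corners[i];
            double[] b = corners[(i + 1) % 4];
            sum += Math.hypot(b[0] - a[0], b[1] - a[1]);
        }
        return sum / 4.0;
    }

    /** In-plane rotation (radians) from the top edge, corner 0 to corner 1. */
    static double rotation(double[][] corners) {
        return Math.atan2(corners[1][1] - corners[0][1],
                          corners[1][0] - corners[0][0]);
    }

    /**
     * Predicts the four image-space corners of a rectangle given in the
     * marker frame. rectM is {xMin, yMin, xMax, yMax} in meters relative to
     * the marker center (x right, y down); marginM widens it on every side.
     */
    static double[][] predictRegion(double[][] corners, double markerSideM,
                                    double[] rectM, double marginM) {
        double[] c = center(corners);
        double pxPerMeter = averageSide(corners) / markerSideM;
        double theta = rotation(corners);
        double cos = Math.cos(theta), sin = Math.sin(theta);

        double x0 = rectM[0] - marginM, y0 = rectM[1] - marginM;
        double x1 = rectM[2] + marginM, y1 = rectM[3] + marginM;
        double[][] local = { { x0, y0 }, { x1, y0 }, { x1, y1 }, { x0, y1 } };

        double[][] out = new double[4][2];
        for (int i = 0; i < 4; i++) {
            double lx = local[i][0] * pxPerMeter;
            double ly = local[i][1] * pxPerMeter;
            // Rotate by the marker angle, then translate to the marker center.
            out[i][0] = c[0] + lx * cos - ly * sin;
            out[i][1] = c[1] + lx * sin + ly * cos;
        }
        return out;
    }
}
```

Calling `predictRegion` twice with two different `marginM` values yields the wider and tighter regions. The rectangle offsets and the physical marker size would come from the rulebook dimensions; no specific values are assumed here.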

Two margin levels were used:

- Larger margin for cropping/inference (to ensure context reaches the network)

- Tighter margin for post-filtering accepted detections (to reject edge artifacts and reflections)

This two-stage allowance reduced boundary-related false positives while still providing sufficient contextual information to the model.
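As an illustration of the second stage, the sketch below keeps only detections whose box center falls inside an inset window of the crop. The `Detection` type, the `keepInside` method, and the idea of expressing the tighter margin as a pixel inset on the axis-aligned crop are assumptions made for this example, not a description of our exact filter.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical post-filter for detections on the axis-aligned crop. */
final class MarginFilter {

    /** Hypothetical detection: box center in crop pixels plus a class label. */
    static final class Detection {
        final double cx;
        final double cy;
        final String label;

        Detection(double cx, double cy, String label) {
            this.cx = cx;
            this.cy = cy;
            this.label = label;
        }
    }

    /**
     * Keeps detections whose center lies at least insetPx away from every
     * edge of a square crop of side cropSize (for example 480).
     */
    static List<Detection> keepInside(List<Detection> detections,
                                      int cropSize, double insetPx) {
        double lo = insetPx;
        double hi = cropSize - insetPx;
        List<Detection> kept = new ArrayList<>();
        for (Detection d : detections) {
            boolean inside = d.cx >= lo && d.cx <= hi
                          && d.cy >= lo && d.cy <= hi;
            if (inside) {
                kept.add(d);
            }
        }
        return kept;
    }
}
```

The inset value is a tuning parameter: it should roughly equal the difference between the larger and the tighter margin after scaling to the crop resolution.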

Motion Stability and Image Sharpness

Even small residual angular rates produce motion blur that severely degrades small-object detection performance.

After each moveTo command, we enforced a stabilization wait:

- Sample robot kinematics at 10 Hz

- Compute magnitude of angular velocity vector

- Require ≥ 5 consecutive samples below 0.001 rad/s (~0.057°/s)

Only after meeting this criterion did we trigger image capture. At 10 Hz, five consecutive samples amount to a minimum dwell of roughly 500 ms, which proved effective at eliminating most post-maneuver blur while remaining compatible with overall timing margins.
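The stabilization check can be sketched as a small polling loop. The `AngularVelocitySource` interface is a hypothetical stand-in for whatever kinematics call the API exposes, and the `maxSamples` timeout is an assumption added so the loop cannot block forever; the threshold and sample count are the values given above.

```java
/** Hypothetical stabilization gate run after each move command. */
final class StabilizationWait {

    /** Hypothetical source of angular velocity as {wx, wy, wz} in rad/s. */
    interface AngularVelocitySource {
        double[] read();
    }

    static final double THRESHOLD_RAD_S = 0.001; // about 0.057 deg/s
    static final int REQUIRED_SAMPLES = 5;       // consecutive quiet samples
    static final long PERIOD_MS = 100;           // 10 Hz sampling

    /**
     * Polls the source until REQUIRED_SAMPLES consecutive readings fall
     * below the threshold. Returns false if maxSamples readings pass
     * without reaching that streak, or if the thread is interrupted.
     */
    static boolean waitUntilStable(AngularVelocitySource source,
                                   int maxSamples, long periodMs) {
        int consecutive = 0;
        for (int i = 0; i < maxSamples; i++) {
            double[] w = source.read();
            double magnitude = Math.sqrt(w[0] * w[0] + w[1] * w[1] + w[2] * w[2]);
            // Any sample above the threshold resets the streak.
            consecutive = (magnitude < THRESHOLD_RAD_S) ? consecutive + 1 : 0;
            if (consecutive >= REQUIRED_SAMPLES) {
                return true;
            }
            try {
                Thread.sleep(periodMs);
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
                return false;
            }
        }
        return false;
    }
}
```

A call such as `waitUntilStable(source, 50, StabilizationWait.PERIOD_MS)` would give up after about five seconds; what to do on a timeout (capture anyway, or retry the move) is a design decision this sketch leaves open.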

Multi-Attempt Positioning with Backup Waypoints

Every major observation waypoint included a backup position and orientation (typically a 0.3–0.5 m translation, occasionally combined with a 180° flip). If the primary location failed to yield the required number of ArUco markers after several acquisition cycles, the robot automatically transitioned to the backup pose.

This simple redundancy increased successful observation rates without significantly increasing path length or power consumption.
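The fallback logic amounts to a nested retry loop. In the sketch below, the `Observer` interface, its two methods, and the pose indexing (0 for the primary pose, 1 for the backup) are hypothetical names used for illustration; the real code would wrap the move command and the ArUco detection step.

```java
/** Hypothetical retry logic over a primary pose and its backups. */
final class BackupWaypoints {

    /** Hypothetical hooks into motion and marker detection. */
    interface Observer {
        /** Moves to the pose with the given index (0 = primary). */
        void moveTo(int poseIndex);

        /** Captures one image and returns the number of markers found. */
        int countMarkers();
    }

    /**
     * Tries each pose in order, allowing attemptsPerPose acquisition cycles
     * at each. Returns the index of the first pose that yields at least
     * requiredMarkers markers, or -1 if none does.
     */
    static int observe(Observer observer, int poseCount,
                       int attemptsPerPose, int requiredMarkers) {
        for (int pose = 0; pose < poseCount; pose++) {
            observer.moveTo(pose);
            for (int attempt = 0; attempt < attemptsPerPose; attempt++) {
                if (observer.countMarkers() >= requiredMarkers) {
                    return pose;
                }
            }
        }
        return -1;
    }
}
```

Because the backup move only happens after the primary pose has exhausted its attempts, the extra travel is paid only on failure.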

Overcoming the "Pain Points"

The path to the finals wasn't without its friction. Our team had to navigate several "real-world" engineering hurdles:

- Legacy Dependency Hell: Android 7.1.1 is nearly a decade old. Many required libraries have been wiped from the internet, forcing us to hunt through archived servers and backup repositories.

- Simulation vs. Reality: While we made our setup platform-agnostic (achieving 100% stability on Arch and Ubuntu), we struggled with Docker visualization on macOS and decided to pivot to Linux to save time.

- The Data Gap: With no real-world dataset available, we started with manual annotation on Roboflow, eventually building a semi-automatic pipeline to speed up training as the model improved.

- Iterative Speed: The upload process to the official JAXA simulator was manual and slow. We eventually bypassed this by implementing a Web API to automate our submissions.

Final Thoughts

In space, there is no room for error. Moving from the national round to 4th in the world was an incredible journey in balancing legacy hardware with cutting-edge AI.