NemoClaw: The Security Layer AI Agents Actually Needed

NVIDIA's NemoClaw doesn't replace OpenClaw — it wraps it inside an isolated Linux sandbox enforced by Landlock, seccomp, and network namespaces, with declarative YAML policies governing every outbound byte. If you've been waiting for autonomous AI agents to be usable in production without handing them the keys to your entire filesystem, this is that moment.

I've been running OpenClaw in various configurations since January 2026. It's genuinely impressive software: an always-on AI assistant that connects to your messaging apps, runs tools, manages schedules, and iterates on its own memory. It is also, from a backend security standpoint, an absolute liability: it runs at your user's privilege level, with access to every file your account can read and unconstrained network egress.

When NVIDIA announced NemoClaw at GTC 2026 on March 16th, half the developer community wrote it off as a marketing exercise. Jensen Huang compared OpenClaw to Linux, HTML, and Kubernetes in the same breath, which didn't help. But underneath the keynote theater, the actual architecture is worth taking seriously. Let me break it down the way I wish someone had done it for me before I spent three hours debugging a permission issue in a Docker container.

First: Why OpenClaw Was Always a Security Problem

OpenClaw, created by Peter Steinberger and launched (as "Clawdbot") in November 2025, became the fastest-growing open source project in GitHub history. It crossed 246,000 stars by March 2026. That growth rate is extraordinary — and it masks an architectural decision that was always going to become a problem at scale.

By default, OpenClaw runs as your user. Not in a container. Not behind a policy engine. As your actual Unix user, with your filesystem, your environment variables, your SSH keys, your cloud credentials, and your browser cookies all reachable from the same process that's executing arbitrary LLM-generated tool calls.

⚠ The Incident That Forced This

In early 2026, roughly one in five skills published to the official ClawHub Marketplace was confirmed malicious. A coordinated campaign uploaded approximately 354 skills disguised as legitimate tools, all quietly exfiltrating API keys, browser session tokens, and crypto wallet data. A companion vulnerability meant visiting a single malicious webpage could hand an attacker full control of your local OpenClaw instance. This is the architectural gap NemoClaw was built to close.

The security model was application-level: allowlists, pairing codes, UI-based restrictions. This is fine for a personal weekend project. It is not fine for anything touching client data, production systems, or credentials that carry real-world consequences. The problem wasn't OpenClaw's intelligence — it was that there was no enforcement layer beneath it.

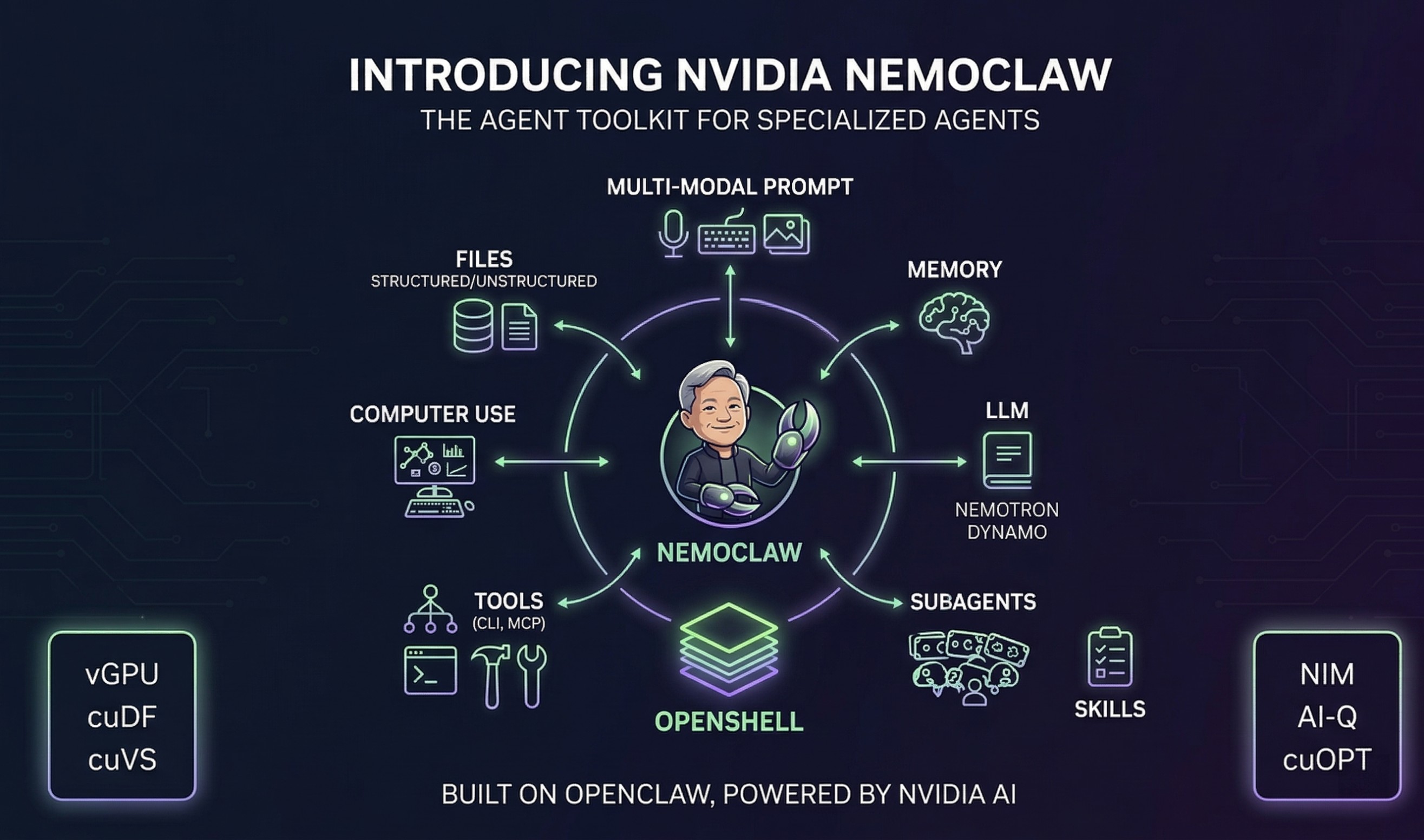

What NemoClaw Actually Is

OpenClaw is the employee. OpenShell is the office building. NemoClaw is the building management system that constructs the building, installs badge readers on every door, and keeps an audit log of every room that employee enters. The employee doesn't change. The work doesn't change. But now every action is bounded, logged, and enforced at the OS level — not at the application level.

More precisely, NemoClaw is three things packaged together:

- A TypeScript CLI (nemoclaw) that orchestrates the full stack: creating sandboxes, configuring inference providers, managing lifecycle.

- A versioned Python blueprint that defines the declarative sandbox configuration: filesystem policy, network policy, process constraints, inference routing.

- The OpenShell runtime, which is NVIDIA's secure container orchestration layer, part of the Agent Toolkit, that actually enforces everything the blueprint declares.

OpenClaw itself runs inside that sandbox, completely unchanged. Same reasoning engine. Same memory system. Same skills. Just a different, enforced environment around it.

The Security Layers: A Backend Engineer's Read

This is where it gets interesting. NemoClaw isn't doing one thing to achieve isolation — it's stacking multiple independent enforcement mechanisms. Let's go through them.

1. Linux Namespaces + Network Isolation

The sandbox runs in its own network namespace (netns). This means it has its own network stack, its own routing table, and — critically — cannot reach the host network or other containers without explicitly configured forwarding. Every outbound connection from OpenClaw routes through the OpenShell gateway, which applies the policy engine before allowing the packet out.

This is fundamentally different from application-level allowlisting. The kernel enforces it. A compromised skill can't just make a raw socket call to an arbitrary IP and bypass the policy layer.

2. Landlock LSM (Linux Security Module)

Landlock is a relatively recent addition to the Linux kernel (5.13+) that provides filesystem access control at the syscall level, without requiring root. The NemoClaw blueprint configures it with compatibility: best_effort — meaning it applies maximum restrictions on kernels that support it and degrades gracefully on older ones.
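To make the best_effort idea concrete, here is a minimal sketch of the kind of check a launcher could run before deciding how much restriction to expect. The function name and the version-parsing approach are my own assumptions, not NemoClaw's implementation. A version number is also only a rough proxy: Landlock still has to be compiled into the kernel and enabled in its LSM list.

```python
import platform
import re

# Landlock first shipped in Linux 5.13 (per the paragraph above).
LANDLOCK_MIN_KERNEL = (5, 13)


def kernel_at_least(release: str, minimum: tuple = LANDLOCK_MIN_KERNEL) -> bool:
    """Return True if a release string like '5.15.0-91-generic' is >= minimum.

    Hypothetical helper for illustration; unparseable strings count as
    'not supported' so the caller falls back to the degraded path.
    """
    match = re.match(r"(\d+)\.(\d+)", release)
    if match is None:
        return False
    return (int(match.group(1)), int(match.group(2))) >= minimum


if __name__ == "__main__":
    # On a real host, platform.release() returns the running kernel's release.
    print(kernel_at_least(platform.release()))
```

If the check fails, a best-effort launcher would carry on with the remaining layers rather than refusing to start, which is the trade-off the compatibility setting expresses.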

The filesystem policy is explicit and declared in YAML:

    filesystem_policy:
      include_workdir: true
      read_only:
        - /usr
        - /lib
        - /proc
        - /dev/urandom
        - /app
        - /etc
      read_write:
        - /sandbox
        - /tmp
        - /dev/null
        - /sandbox/.openclaw-data

    landlock:
      compatibility: best_effort

Your home directory, ~/.ssh, ~/.aws, ~/.config, any secrets stored in environment files — the agent cannot reach any of it. Not because OpenClaw is told not to. Because the kernel won't let it.
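As a way to reason about what that policy permits, here is a small Python model of the lookup. It is a sketch of the semantics only: the path lists come from the YAML above, while access_for and its matching rules are assumptions of mine, and the real enforcement happens in the kernel, not in code like this. Symlinks are not modeled.

```python
import posixpath
from pathlib import PurePosixPath

# Paths copied from the policy above. The evaluation logic is an
# illustrative model, not Landlock's or OpenShell's implementation.
READ_ONLY = ["/usr", "/lib", "/proc", "/dev/urandom", "/app", "/etc"]
READ_WRITE = ["/sandbox", "/tmp", "/dev/null", "/sandbox/.openclaw-data"]


def _is_under(path: PurePosixPath, root: str) -> bool:
    """True if path equals root or sits somewhere beneath it."""
    root_path = PurePosixPath(root)
    return path == root_path or root_path in path.parents


def access_for(path: str) -> str:
    """Return 'read_write', 'read_only', or 'denied' for an absolute path."""
    # Collapse '..' segments first so '/tmp/../home' is not treated as '/tmp'.
    target = PurePosixPath(posixpath.normpath(path))
    if any(_is_under(target, root) for root in READ_WRITE):
        return "read_write"
    if any(_is_under(target, root) for root in READ_ONLY):
        return "read_only"
    return "denied"  # deny by default: anything unlisted is unreachable


if __name__ == "__main__":
    for candidate in ("/home/alice/.ssh/id_ed25519", "/etc/hosts", "/sandbox/notes.txt"):
        print(candidate, "->", access_for(candidate))
```

The point of the model is the last line of access_for: nothing has to list ~/.ssh as forbidden, because it was never listed as allowed.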

3. seccomp Syscall Filtering

On top of Landlock, the sandbox applies a seccomp filter that restricts which system calls the process can invoke. This is the same mechanism used by Docker, Chrome's renderer sandboxing, and Firefox's content process isolation. A compromised agent that somehow bypasses the Landlock filesystem restriction still hits the seccomp filter on the underlying syscalls.

Defense in depth is the right instinct here. No single layer is sufficient; the combination of netns, Landlock, and seccomp means an attacker needs to chain multiple independent bypasses, which is materially harder than defeating a single application-layer check.

4. Declarative Network Policy Engine (YAML, Deny-by-Default)

This is the most operationally visible layer. The default policy in nemoclaw-blueprint/policies/openclaw-sandbox.yaml denies all network egress except explicitly allowlisted endpoints. The agent cannot reach the internet by default. You have to add entries:

    network_policies:
      nvidia:
        endpoints:
          - host: integrate.api.nvidia.com
            port: 443
            protocol: rest
            tls: terminate
            enforcement: enforce
            rules:
              - allow:
                  method: '*'
                  path: /**
        binaries:
          - path: /usr/local/bin/openclaw

The binaries field is worth noting — it ties the policy to specific executables. openclaw is allowed to reach NVIDIA's API, but an arbitrary shell script or curl invocation is not. That's process-level enforcement on top of network-level enforcement.
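The decision that policy encodes can be sketched in a few lines of Python. This is a simplified model under my own assumptions: the Endpoint and NetworkPolicy classes and the is_allowed function are hypothetical names, and glob matching via fnmatch stands in for whatever matcher the OpenShell gateway actually uses. The host, port, and binary path are the ones from the YAML above.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase
from typing import Iterable, Tuple


@dataclass(frozen=True)
class Endpoint:
    host: str
    port: int
    methods: Tuple[str, ...]  # "*" means any HTTP method
    path_glob: str            # e.g. "/**"


@dataclass(frozen=True)
class NetworkPolicy:
    endpoints: Tuple[Endpoint, ...]
    binaries: Tuple[str, ...]  # executables this policy applies to


# Mirrors the "nvidia" entry shown above.
NVIDIA = NetworkPolicy(
    endpoints=(Endpoint("integrate.api.nvidia.com", 443, ("*",), "/**"),),
    binaries=("/usr/local/bin/openclaw",),
)


def is_allowed(policies: Iterable[NetworkPolicy], binary: str, host: str,
               port: int, method: str, path: str) -> bool:
    """Deny by default; allow only if binary, endpoint, and rule all match."""
    for policy in policies:
        if binary not in policy.binaries:
            continue  # policy is tied to specific executables
        for ep in policy.endpoints:
            if (ep.host, ep.port) != (host, port):
                continue
            method_ok = "*" in ep.methods or method.upper() in ep.methods
            # fnmatch's "*" also matches "/", so "/**" acts as "any path".
            if method_ok and fnmatchcase(path, ep.path_glob):
                return True
    return False


if __name__ == "__main__":
    print(is_allowed([NVIDIA], "/usr/local/bin/openclaw",
                     "integrate.api.nvidia.com", 443, "POST", "/v1/chat/completions"))
    print(is_allowed([NVIDIA], "/usr/bin/curl",
                     "integrate.api.nvidia.com", 443, "GET", "/"))
```

Note that the same destination is allowed for openclaw and refused for curl. That asymmetry is what the binaries field buys you.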

NemoClaw ships preset policy files for common integrations: PyPI, Docker Hub, Slack, Jira. You apply them as-is or use them as templates. Hot-swap works at runtime via openshell policy set without redeploying the sandbox.

5. Privacy Router (PII Stripping Before Cloud Inference)

When the agent calls a frontier model (Claude, GPT, Gemini), the request passes through the OpenShell gateway's privacy router, which strips personally identifiable information before the bytes leave the sandbox. NVIDIA acquired Gretel's differential privacy technology for this. It's not perfect — no automated PII detection is — but it's a meaningful layer for regulated industries where data residency or PII exposure is a compliance concern.
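For a sense of where such a step sits in the request path, here is a deliberately naive redaction pass in Python. To be clear, this is not how NVIDIA's privacy router or Gretel's technology works; it is a regex stand-in with made-up pattern names, meant only to show the shape of "rewrite the prompt before it leaves the sandbox."

```python
import re

# Deliberately simple patterns for illustration only. Real PII detection
# needs far more than two regexes, as the paragraph above already concedes.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(text: str) -> str:
    """Replace each match with a bracketed placeholder naming its category."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


if __name__ == "__main__":
    print(redact("Email jane.doe@example.com about SSN 123-45-6789."))
```

Placeholders rather than deletion keep the prompt grammatical, so the model can still reason about "an email address" without seeing the address itself.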

6. Full Audit Trail

Every allow and deny decision is logged with enough context to reconstruct exactly what the agent tried to do, what policy evaluated it, and what the outcome was. You can watch it in real time via openshell term. For enterprise compliance — SOC 2, HIPAA, any framework where you need to demonstrate what your AI system touched — this changes the answer from "we don't know" to "here's the log."
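I haven't seen the on-disk log format documented, so the following is a sketch of what one decision record could look like as a JSON line. Every field name here is an assumption of mine, not OpenShell's schema. It captures the three things the paragraph above says you need: what was attempted, which policy evaluated it, and the outcome.

```python
import json
from datetime import datetime, timezone
from typing import Optional


def audit_record(binary: str, host: str, port: int, decision: str,
                 policy: Optional[str] = None,
                 now: Optional[datetime] = None) -> str:
    """Serialize one egress decision as a single JSON line.

    Hypothetical schema for illustration; 'policy' is None when no policy
    matched and the request fell through to deny-by-default.
    """
    if decision not in ("allow", "deny"):
        raise ValueError(f"unknown decision: {decision!r}")
    timestamp = (now or datetime.now(timezone.utc)).isoformat()
    return json.dumps({
        "ts": timestamp,
        "binary": binary,
        "destination": f"{host}:{port}",
        "decision": decision,
        "policy": policy,
    }, sort_keys=True)


if __name__ == "__main__":
    print(audit_record("/usr/local/bin/openclaw",
                       "integrate.api.nvidia.com", 443, "allow", policy="nvidia"))
    print(audit_record("/usr/bin/curl", "example.com", 443, "deny"))
```

One record per line keeps the log append-only and easy to grep, which is most of what an auditor will ask for.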

The Claw Ecosystem: Where NemoClaw Fits

OpenClaw's viral growth spawned an entire ecosystem in under two months. Understanding where NemoClaw sits requires understanding the landscape it entered.

| Project | Language | Security Model | Target | RAM |
|---|---|---|---|---|
| OpenClaw | TypeScript | App-level only | General / Feature-rich | 1GB+ |
| NemoClaw | TS + Python | OS-level (Landlock + seccomp + netns) | Enterprise / Production | ~500MB+ |
| NanoClaw | TypeScript | Container isolation per chat group | Security-first, regulated industries | ~100MB |
| ZeroClaw | Rust | Restrictive defaults, small attack surface | Resource-constrained / Production | <5MB |
| PicoClaw | Go | Basic isolation | Edge / Embedded ($10 boards) | <10MB |
| IronClaw | Rust | TEE + WebAssembly sandbox per tool | Cryptographic security guarantees | Moderate |
| Nanobot | Python | Minimal | Learning / Raspberry Pi | ~100MB |

NanoClaw deserves a specific callout here because it's often confused with NemoClaw. NanoClaw, built by the Qwibit AI team, launched on January 31, 2026 — literally one day after OpenClaw's rename. Its pitch: OpenClaw's 500,000 lines of code are impossible for any human to audit. NanoClaw delivers equivalent core functionality in approximately 700 lines of TypeScript. It runs agents in their own Linux containers (Docker or Apple Container on macOS), with a separate memory and filesystem per chat group. It's built directly on Anthropic's Agents SDK. The philosophy is "small enough to understand" — container isolation baked into the foundation, not bolted on after.

PicoClaw, by contrast, came from Sipeed (an embedded hardware company) and was built in a single day on February 9, 2026. Written in Go, it targets $10 boards with under 10MB RAM. Notably, about 95% of its core code was generated by an AI agent via a self-bootstrapping process. It's not a security project; it's an efficiency project. The threat model for a $10 sensor board and the threat model for an enterprise workflow automation platform are genuinely different problems.

📌 Key Distinction

NanoClaw is a ground-up security-first rewrite. NemoClaw is a security wrapper around the existing OpenClaw. If your primary concern is codebase auditability and you use WhatsApp or Claude-based workflows, NanoClaw is worth serious consideration. If you need the full OpenClaw feature set with enterprise enforcement on top, NemoClaw is the answer.

What It Can Actually Do (Beyond the Security Layer)

The security story dominates the NemoClaw conversation, but it's worth being concrete about the operational capabilities this stack gives you.

- Inference flexibility: NemoClaw is hardware-agnostic. You don't need an NVIDIA GPU. It works with cloud inference (NVIDIA Endpoint API, Claude, GPT, Gemini), local Ollama, or any OpenAI-compatible endpoint. Nemotron is the suggested default, not a requirement.

- Hot-swappable inference providers: Switch between models at runtime without rebuilding the sandbox. The inference routing layer is separate from the agent layer.

- Real-time network policy management: The openshell term TUI shows every outbound request as it happens and lets you approve or deny unknown endpoints in real time, without a config change or restart.

- Versioned blueprints: The blueprint lifecycle runs four stages: resolve, verify digest, plan, apply. This gives you reproducible, auditable sandbox configurations across deployments.

- Remote GPU deployment: The docs include a first-class path for deploying to a remote GPU instance for always-on operation, which matters for production workloads.

- Preset policy library: Pre-built policies for PyPI, Docker Hub, Slack, Jira, GitHub, npm. Apply them as-is or fork them.
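The "verify digest" stage of the blueprint lifecycle is the one that makes the rest trustworthy, so here is a minimal sketch of it. The article's sources don't say which hash NemoClaw uses; SHA-256 is my assumption, and verify_digest and run_lifecycle are hypothetical names. The part worth copying is the ordering: nothing is planned or applied until the bytes match the pinned digest.

```python
import hashlib
import hmac
from typing import List


def verify_digest(blueprint: bytes, pinned_sha256: str) -> bool:
    """Compare the blueprint's SHA-256 against a pinned hex digest."""
    actual = hashlib.sha256(blueprint).hexdigest()
    # compare_digest avoids leaking how many leading characters matched.
    return hmac.compare_digest(actual, pinned_sha256.lower())


def run_lifecycle(blueprint: bytes, pinned_sha256: str) -> List[str]:
    """Walk the four stages, refusing to plan or apply unverified bytes.

    The 'resolve', 'plan', and 'apply' stages are placeholders here; only
    the digest check does real work.
    """
    completed = ["resolve"]
    if not verify_digest(blueprint, pinned_sha256):
        raise ValueError("blueprint digest mismatch; refusing to plan or apply")
    completed.append("verify digest")
    completed.extend(["plan", "apply"])
    return completed


if __name__ == "__main__":
    blob = b"filesystem_policy: {}\n"
    pinned = hashlib.sha256(blob).hexdigest()
    print(run_lifecycle(blob, pinned))
```

Pinning a digest alongside the version is what turns "versioned" into "reproducible": two deployments that resolve the same version either get identical bytes or fail loudly.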

✦ Early Alpha — Set Expectations Accordingly

NemoClaw shipped as early preview on March 16, 2026. The GitHub repo is explicit: APIs, configuration schemas, and runtime behavior are subject to breaking changes. The macOS installation has known rough edges — the step 6 SSH handshake during onboarding fails on macOS because the installer assumes a Linux host. The fix is manual: connect to the sandbox after install and run openclaw doctor --fix and openclaw plugins install /opt/nemoclaw. Don't deploy this to production yet. But do experiment with it now.

The Bigger Picture: NVIDIA Wants to Own the Agent Infrastructure Layer

There's a strategic reading here that's worth acknowledging. Futurum Group analysts pointed out at GTC 2026 that NVIDIA is explicitly framing agent trust as an infrastructure problem, not an application one. That's a deliberate positioning move — it places NVIDIA in the same relationship to AI agents that AWS is to web applications: the platform you can't avoid if you want the feature-complete, enterprise-safe version.

NemoClaw is hardware-agnostic (it runs fine on Apple Silicon or a VPS with no GPU), but the moment enterprises need local inference at scale, they naturally gravitate toward NVIDIA's GPU infrastructure. The software builds the lock-in surface that makes the hardware purchases inevitable. It's the same playbook as CUDA: make the best developer experience dependent on your silicon.

Whether that's good or bad depends on your perspective. But from an engineering standpoint, the security architecture is genuinely solving a real problem. The alternative isn't some open, neutral solution — it's running OpenClaw with full user privileges and hoping your skills aren't malicious.

Should You Run It?

Here's the honest engineering answer:

- Experimenting, learning, hobby projects with no sensitive data? Stick with plain OpenClaw. The setup overhead of NemoClaw isn't justified when the threat model is low.

- Client data, production workflows, anything with credentials that matter? NemoClaw is the right call. The filesystem isolation alone is worth the extra 10 minutes of setup.

- Security-first, and you primarily use WhatsApp or Claude-based workflows? Look at NanoClaw seriously. Smaller attack surface, auditable codebase, container isolation built in from day one.

- Edge/embedded deployments? PicoClaw or ZeroClaw. NemoClaw's overhead isn't appropriate for a $10 board.

The fact that NemoClaw requires a fresh OpenClaw install (you can't layer it onto an existing instance) is a genuine migration friction point. But you can export your existing OpenClaw config and import it into the NemoClaw sandbox. The agent itself is unchanged — just the environment around it.

On macOS, the current install experience is rough around the edges. The SSH handshake in step 6 fails consistently because the installer was written for Linux. The workaround described earlier is straightforward once you know it, but the documentation doesn't cover it yet. File issues. The project is actively iterating.

"OpenClaw opened the next frontier of AI to everyone and became the fastest-growing open source project in history. OpenClaw is the operating system for personal AI. This is the moment the industry has been waiting for — the beginning of a new renaissance in software."

— Jensen Huang, GTC 2026

That's a big claim. But the security problem it was built to solve is real, the architectural approach is sound, and the timing is right. The question now is whether enterprises will adopt NemoClaw's guardrails as the standard, or build proprietary alternatives. The answer to that will determine how far NVIDIA's software moat extends into the agent layer — and how much optionality you have as a developer building on top of it.

References:

- NVIDIA NemoClaw GitHub — github.com/NVIDIA/NemoClaw

- Official Docs — docs.nvidia.com/nemoclaw/latest/

- NVIDIA Press Release, GTC 2026 — nvidianews.nvidia.com

- TechCrunch Coverage — techcrunch.com

- NanoClaw GitHub — github.com/qwibitai/nanoclaw